[HTTP APIs & REST] Organizing an HTTP API Based on the REST Principles

With this post, I’m continuing to publish the v2 of my book dedicated to APIs. If you like this book, please rate it on GitHub, Amazon, or Goodreads.

Chapter 37. Organizing an HTTP API Based on the REST Principles

Now let's discuss the specifics: what does it mean exactly to “follow the protocol's semantics” and “develop applications in accordance with the REST architectural style”? Remember, we are talking about the following principles:

Operations must be stateless

Data must be marked as cacheable or non-cacheable

There must be a uniform interface of communication between components

Network systems are layered.

We need to apply these principles to an HTTP-based interface, adhering to the letter and spirit of the standard:

The URL of an operation must point to the resource the operation is applied to, serving as a cache key for GET operations and an idempotency key for PUT and DELETE operations.

HTTP verbs must be used according to their semantics.

Properties of the operation, such as safety, cacheability, idempotency, as well as the symmetry of GET / PUT / DELETE methods, request and response headers, response status codes, etc., must align with the specification.

NB: we're deliberately skipping many nuances of the standard:

A caching key might be composite (i.e., include request headers) if the response contains the Vary header.

An idempotency key might also be composite if the request contains the Range header.

If there are no explicit cache control headers, the caching policy will not be defined by the HTTP verb alone. It will also depend on the response status code, other request and response headers, and platform policies.

To keep the chapter size reasonable, we will not discuss these details, but we highly recommend reading the standard thoroughly.

Let's talk about organizing HTTP APIs based on a specific example. Imagine an application start procedure: as a rule of thumb, the application requests the current user profile and important information regarding them (in our case, ongoing orders), using the authorization token saved in the device's memory. We can propose a straightforward endpoint for this purpose:

GET /v1/state HTTP/1.1

Authorization: Bearer <token>

→

HTTP/1.1 200 OK

{ "profile", "orders" }

Upon receiving such a request, the server will check the validity of the token, fetch the user's identifier (user_id), query the database, and return the user's profile and the list of their orders.

This simple monolith API service violates several REST architectural principles:

There is no obvious way to cache responses on the client side (the order state is updated frequently, so there is no sense in saving it).

The operation is stateful as the server must keep tokens in memory to retrieve the user identifier, to which the requested data is bound.

The system comprises a single layer, and therefore, the question of a uniform interface is meaningless.

As long as scaling the backend is not a problem, this approach works. However, as the audience and the service's functionality (and the number of software engineers working on it) grow, we sooner or later face the fact that this monolith architecture incurs too much overhead. Imagine we decided to decompose this single backend into four microservices:

Service A checks authentication tokens

Service B stores user accounts

Service C stores orders

Gateway service D routes incoming requests to other microservices.

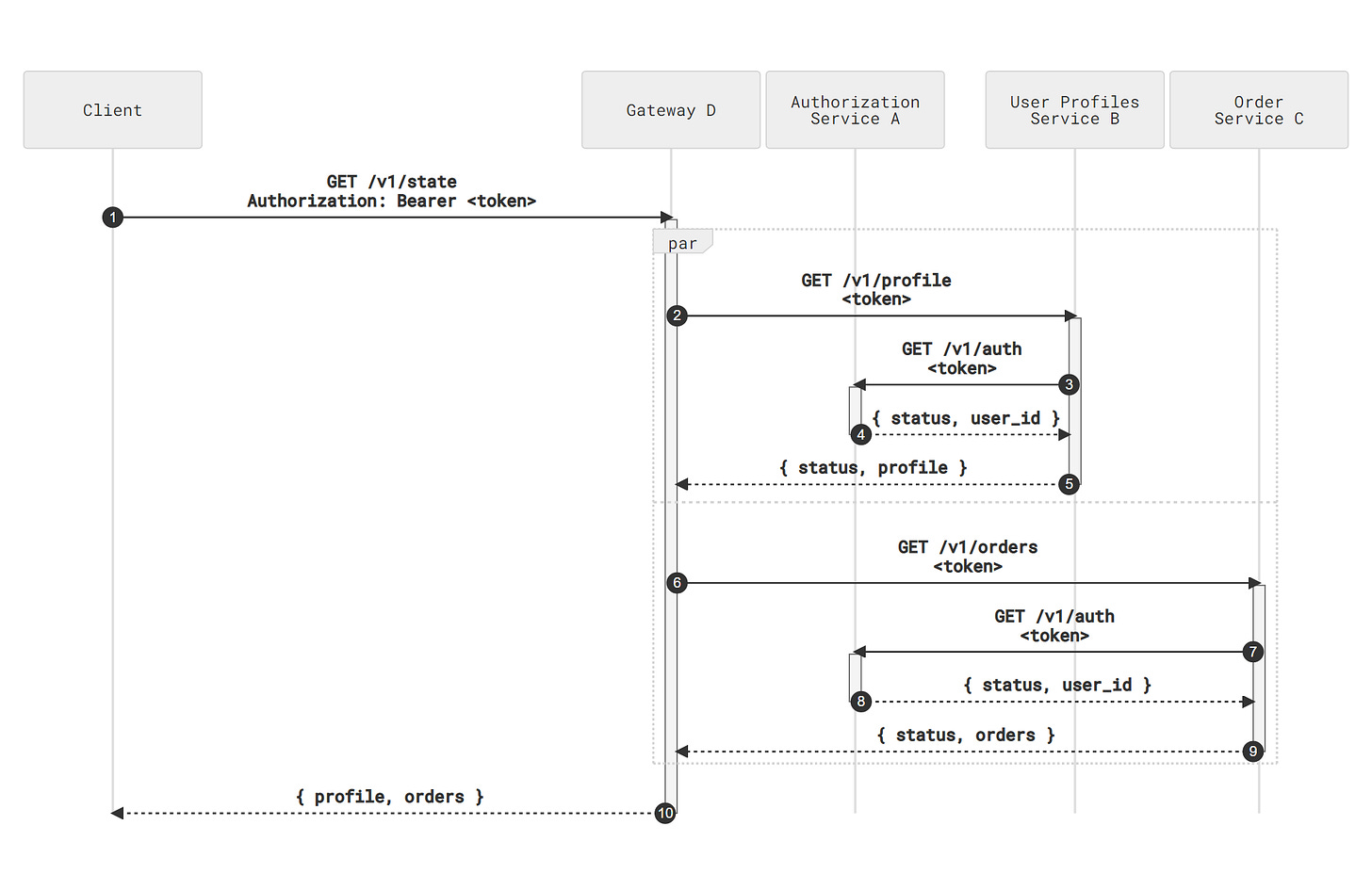

This implies that a request traverses the following path:

Gateway D receives the request and sends it to both Service B and Service C.

B and C call Service A to check the authentication token (passed as a proxied Authorization header or as an explicit request parameter) and return the requested data: the user's profile and the list of their orders.

Service D merges the responses and sends them back to the client.

The original microservice mesh

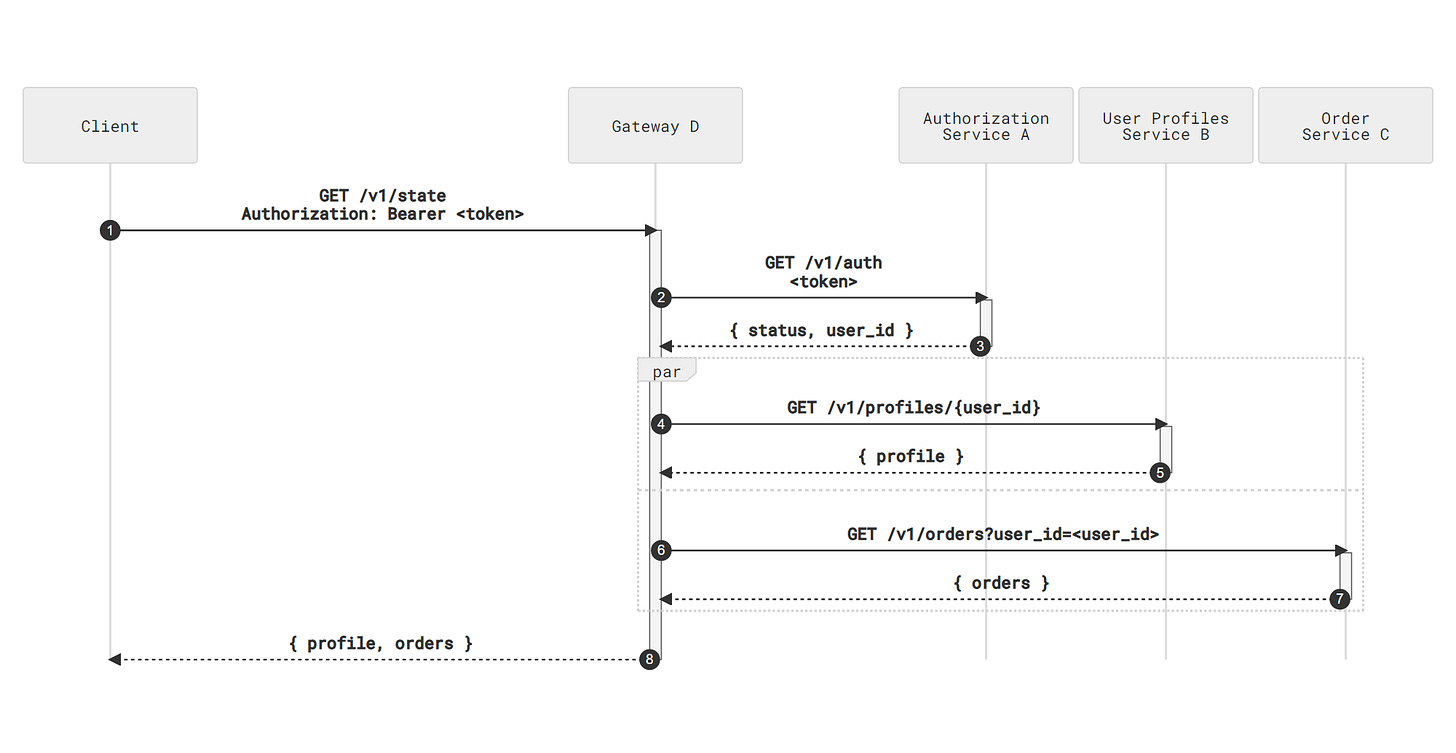

It is quite obvious that in this setup, we put excessive load on the authorization service as every nested microservice now needs to query it. Even if we abolish checking the authenticity of internal requests, it won't help as services B and C can't know the identifier of the user. Naturally, this leads to the idea of propagating the once-retrieved user_id through the microservice mesh:

Gateway D receives a request and exchanges the token for user_id through service A

Gateway D queries service B:

GET /v1/profiles/{user_id}

and service C:

GET /v1/orders?user_id=<user_id>

Step 2. Adding explicit user identifiers

NB: we used the /v1/orders?user_id notation and not, let's say, /v1/users/{user_id}/orders, for two reasons:

The orders service stores orders, not users, and it would be logical to reflect this fact in URLs

If, in the future, we need to allow several users to share one order, the /v1/orders?user_id notation will better reflect the relations between entities.

We will discuss organizing URLs in HTTP APIs in more detail in the next chapter.

Now both services B and C receive the request in a form that makes it redundant to perform additional actions (identifying the user through service A) to obtain the result. By doing so, we refactored the interface, allowing each microservice to stay within its area of responsibility and thus making it compliant with the stateless constraint.
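The gateway logic described above can be sketched in a few lines. This is only an illustration under stated assumptions: the TOKENS dictionary stands in for service A, and the names exchange_token and build_downstream_requests are hypothetical, not part of any real framework.

```python
from typing import List
from urllib.parse import urlencode

# Hypothetical stand-in for service A: maps opaque tokens to user identifiers.
TOKENS = {"token-abc": "user-42"}


def exchange_token(token: str) -> str:
    """Ask 'service A' for the user_id bound to the token (once per request)."""
    user_id = TOKENS.get(token)
    if user_id is None:
        raise PermissionError("unknown or expired token")
    return user_id


def build_downstream_requests(authorization_header: str) -> List[str]:
    """Turn one client request into explicit requests to services B and C."""
    scheme, _, token = authorization_header.partition(" ")
    if scheme != "Bearer" or not token:
        raise PermissionError("expected a Bearer token")
    user_id = exchange_token(token)
    return [
        # Service B: the profile is addressed by its identifier
        f"GET /v1/profiles/{user_id}",
        # Service C: orders are filtered by the user identifier
        f"GET /v1/orders?{urlencode({'user_id': user_id})}",
    ]
```

With this arrangement, services B and C never see the token itself in order to find out whose data is requested; the identifier is already in the URL.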

Let us emphasize that the difference between stateless and stateful approaches is not clearly defined. Microservice B stores the client state (i.e., the user profile) and therefore is stateful according to Fielding's dissertation. However, we rather intuitively agree that storing profiles and just checking token validity is a better approach than doing all the same operations plus having the token cache. In fact, we rather embrace the logical principle of separating abstraction levels which we discussed in detail in the corresponding chapter:

Microservices should be designed to clearly outline their responsibility area and to avoid storing data belonging to other abstraction levels

External entities should be just context identifiers, and microservices should not interpret them

If operations with external data are unavoidable (for example, the authority making a call must be checked), the operations must be organized in a way that reduces them to checking the data integrity.

In our example, we might get rid of unnecessary calls to service A in a different manner — by using stateless tokens, for example, employing the JWT standard. Then services B and C would be capable of deciphering tokens and extracting user identifiers on their own.

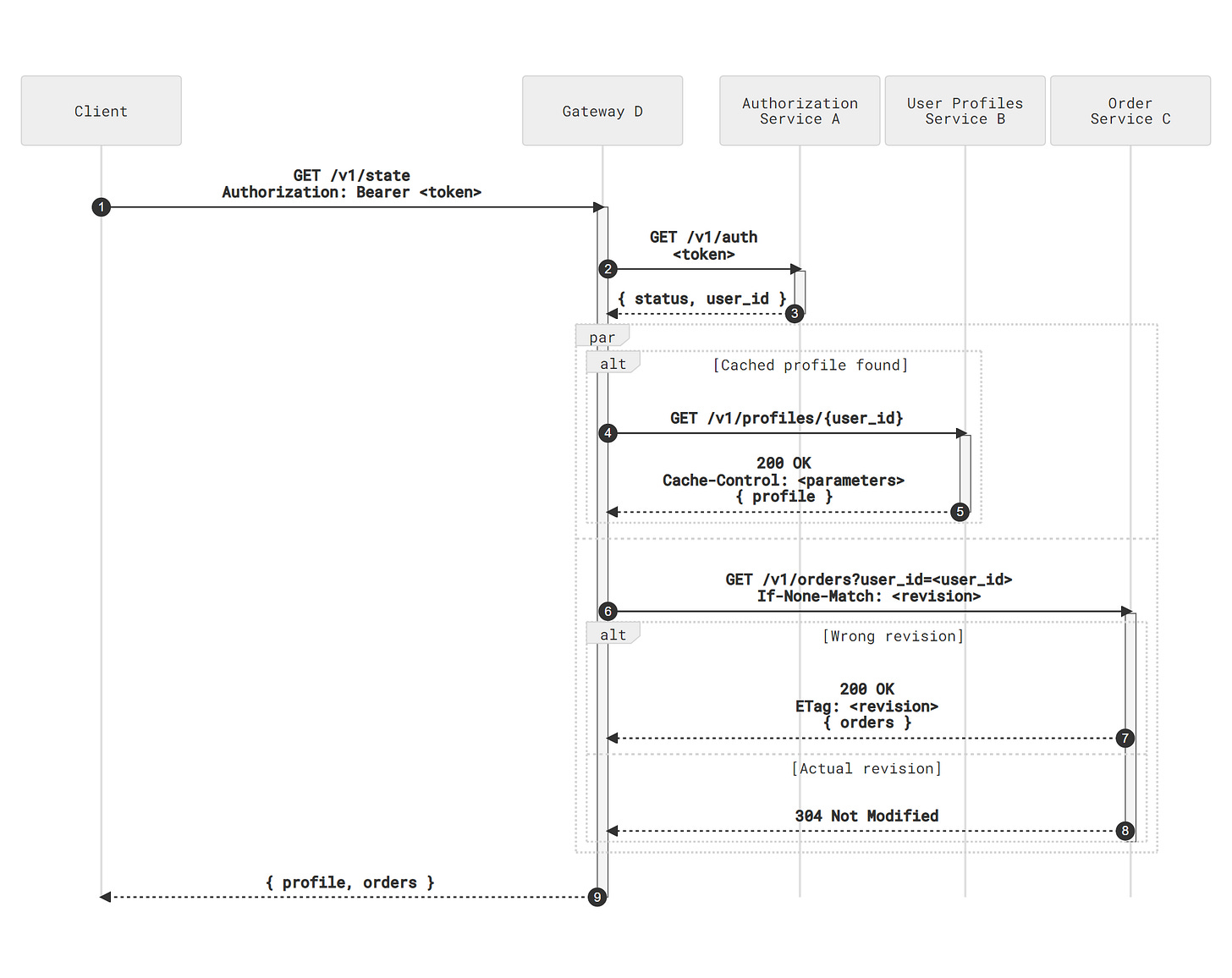

Let us take a step further and notice that the user profile rarely changes, so there is no need to retrieve it each time as we might cache it at the gateway level. To do so, we must form a cache key which is essentially the client identifier. We can do this the long way:

Before requesting service B, generate a cache key and probe the cache

If the data is in the cache, respond with the cached snapshot; if it is not, query service B and cache the response.

Alternatively, we can rely on HTTP caching which is most likely already implemented in the framework we use or easily added as a plugin. In this scenario, gateway D requests the /v1/profiles/{user_id} resource in service B, retrieves the data alongside the cache control headers, and caches it locally.

Now let's shift our attention to service C. The results retrieved from it might also be cached. However, the state of an ongoing order changes more frequently than the user's profile, and returning an outdated state might entail undesirable consequences. Fortunately, as discussed in the “Synchronization Strategies” chapter, we need optimistic concurrency control (i.e., the resource revision) to ensure the functionality works correctly anyway, and nothing prevents us from using this revision as a cache key. Let service C return a tag describing the current state of the user's orders:

GET /v1/orders?user_id=<user_id> HTTP/1.1

→

HTTP/1.1 200 OK

ETag: <revision>

…

Then gateway D can be implemented following this scenario:

Cache the response of GET /v1/orders?user_id=<user_id> using the URL as a cache key

Upon receiving a subsequent request:

Fetch the cached state, if any

Query service C passing the following parameters:

GET /v1/orders?user_id=<user_id> HTTP/1.1

If-None-Match: <revision>

If service C responds with a 304 Not Modified status code, return the cached state

If service C responds with a new version of the data, cache it and then return it to the client.
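The scenario above might look roughly like this in code. It is a minimal sketch, not a production cache: OrdersService is a hypothetical in-memory stand-in for service C, responses are modeled as (status, ETag, body) tuples instead of real HTTP messages, and the revision number is simply used as the ETag value.

```python
from typing import Dict, List, Optional, Tuple


class OrdersService:
    """In-memory stand-in for service C (hypothetical)."""

    def __init__(self) -> None:
        self.orders: Dict[str, List[str]] = {"user-42": ["order-1"]}
        self.revisions: Dict[str, int] = {"user-42": 1}
        self.full_responses = 0  # counts 200 responses that carried a body

    def get_orders(
        self, user_id: str, if_none_match: Optional[str] = None
    ) -> Tuple[int, str, Optional[List[str]]]:
        etag = f'"{self.revisions[user_id]}"'
        if if_none_match == etag:
            return 304, etag, None  # Not Modified: no body is sent
        self.full_responses += 1
        return 200, etag, list(self.orders[user_id])


class Gateway:
    """Gateway D: caches order lists by URL and revalidates them by ETag."""

    def __init__(self, service: OrdersService) -> None:
        self.service = service
        self.cache: Dict[str, Tuple[str, List[str]]] = {}

    def get_orders(self, user_id: str) -> List[str]:
        url = f"/v1/orders?user_id={user_id}"  # the URL is the cache key
        cached = self.cache.get(url)
        known_etag = cached[0] if cached else None
        status, etag, body = self.service.get_orders(user_id, known_etag)
        if status == 304 and cached is not None:
            return cached[1]  # the cached snapshot is still current
        assert body is not None
        self.cache[url] = (etag, body)  # store the new revision and data
        return body
```

Note that the gateway still queries service C on every request; what it saves is the transfer and re-processing of an unchanged order list.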

Step 2. Adding server-side caches

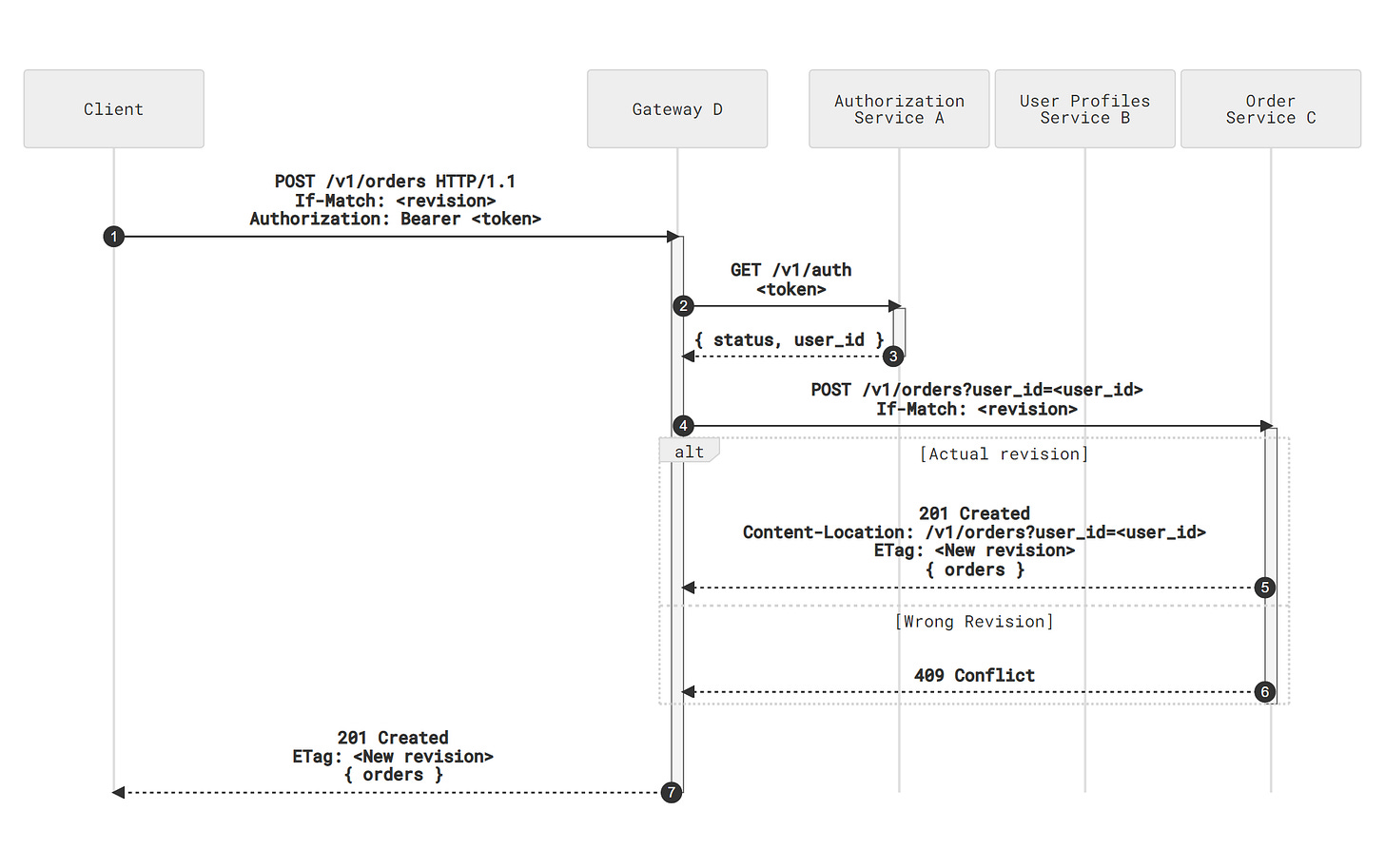

By employing this approach [using ETags to control caching], we automatically get another pleasant bonus. We can reuse the same data in the order creation endpoint design. In the optimistic concurrency control paradigm, the client must pass the current revision of the orders resource to change its state:

POST /v1/orders HTTP/1.1

If-Match: <revision>

Gateway D will add the user's identifier to the request and query service C:

POST /v1/orders?user_id=<user_id> HTTP/1.1

If-Match: <revision>

If the revision is valid and the operation is executed, service C might return the updated list of orders alongside the new revision:

HTTP/1.1 201 Created

Content-Location: /v1/orders?user_id=<user_id>

ETag: <new revision>

{ /* The updated list of orders */ }

and gateway D will update the cache with the current data snapshot.
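To illustrate the server side of this exchange, here is a minimal sketch of how service C might check the revision before creating an order. The OrdersResource class is hypothetical and models a single user's order list in memory. The text above does not say what happens on a mismatch; the sketch assumes the standard 412 Precondition Failed status, and it treats a missing If-Match header the same way as a stale one.

```python
from typing import List, Optional, Tuple


class OrdersResource:
    """Hypothetical in-memory model of one user's order list in service C."""

    def __init__(self) -> None:
        self.revision = 1
        self.orders: List[str] = []

    def etag(self) -> str:
        return f'"{self.revision}"'

    def create_order(
        self, order: str, if_match: Optional[str]
    ) -> Tuple[int, str, Optional[List[str]]]:
        # Reject the write if the client's view of the list is outdated
        # (assumption: a missing header is handled like a stale revision).
        if if_match != self.etag():
            return 412, self.etag(), None  # 412 Precondition Failed
        self.orders.append(order)
        self.revision += 1
        # 201 Created with the new revision and the updated list
        return 201, self.etag(), list(self.orders)
```

A client that receives 412 is expected to re-read the order list, obtain the fresh revision, and decide whether to repeat the operation.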

Creating a new order

Importantly, after this API refactoring, we end up with a system in which we can remove gateway D and make the client itself perform its duty. Nothing prevents the client from:

Storing user_id on its side (or retrieving it from the token, if the format allows it) as well as the last known ETag of the order list

Instead of a single GET /v1/state request, performing two HTTP calls (GET /v1/profiles/{user_id} and GET /v1/orders?user_id=<user_id>) which might be multiplexed thanks to HTTP/2

Caching the result on its side using standard libraries and/or plugins.

From the perspective of implementing services B and C, the presence of a gateway affects nothing, with the exception of security checks. Conversely, we might add a nested gateway to, let's say, split order storage into “cold” and “hot” ones, or make either service B or C work as a gateway themselves.

If we refer to the beginning of the chapter, we will find that we designed a system fully compliant with the REST architectural principles:

Requests to services contain all the data needed to process the request

The interaction interface is uniform to the extent that we might freely transfer gateway functions to the client or another intermediary agent

Every resource is marked as cacheable

Let us reiterate that we can achieve exactly the same qualities with RPC protocols by designing formats for describing caching policies, resource versions, reading and modifying operation metadata, etc. However, the author of this book would, firstly, express doubts regarding the quality of such a custom solution and, secondly, emphasize the considerable amount of code that would need to be written to implement all the functionality stated above.

Authorizing Stateless Requests

Let's elaborate a bit on the no-authorizing service solution (or, to be more precise, the solution with the authorizing functionality being implemented as a library or a local daemon inside services B, C, and D) with all the data embedded in the authorization token itself. In this scenario, every service performs the following actions:

Receives a request like this:

GET /v1/profiles/{user_id}

Authorization: Bearer <token>

Deciphers the token and retrieves a payload. For example, in the following format:

{

// The identifier of a user

// who owns the token

"user_id",

// Token creation timestamp

"iat"

}

Checks that the permissions stated in the token payload match the operation parameters (in our case, compares the user_id passed as a request parameter with the user_id embedded in the token itself) and decides on the validity of the operation.
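The three steps above could be sketched as follows. This is a simplified illustration, not a JWT implementation: the token here is a base64-encoded JSON payload signed with HMAC-SHA256 (signed rather than encrypted), and SECRET, issue_token, read_token, and authorize_profile_request are hypothetical names invented for the example. A real service would rely on an established token library.

```python
import base64
import hashlib
import hmac
import json

SECRET = b"demo-secret"  # hypothetical signing key shared by the services


def issue_token(payload: dict) -> str:
    """Serialize the payload and append an HMAC-SHA256 signature."""
    body = base64.urlsafe_b64encode(json.dumps(payload).encode()).decode()
    signature = hmac.new(SECRET, body.encode(), hashlib.sha256).hexdigest()
    return f"{body}.{signature}"


def read_token(token: str) -> dict:
    """Verify the signature locally (no call to service A) and decode."""
    body, _, signature = token.rpartition(".")
    expected = hmac.new(SECRET, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        raise PermissionError("invalid token signature")
    return json.loads(base64.urlsafe_b64decode(body))


def authorize_profile_request(token: str, requested_user_id: str) -> None:
    """Compare the user_id from the URL with the one in the token payload."""
    payload = read_token(token)
    if payload["user_id"] != requested_user_id:
        raise PermissionError("token does not grant access to this profile")
```

The final comparison is the part that is easy to overlook: a valid signature proves who the caller is, not that the caller may read the requested profile.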

The necessity to compare two user_ids might appear illogical and redundant. However, this opinion is invalid; it originates from the widespread (anti)pattern we started the chapter with, namely the stateful determining of operation parameters:

GET /v1/profile

Authorization: Bearer <token>

Such an endpoint effectively performs all three access control operations in one place:

Authenticates the user by searching the passed token in the token storage

Identifies the user by retrieving the identifier bound to the token

Authorizes the operation by enriching its parameters and implicitly stipulating that users always have access to their own data.

The problem with this approach is that splitting these three operations is not possible. Let us remind the reader about the authorization options we described in the “Authenticating Partners and Authorizing API Calls” chapter: in a complex enough system we will have to solve the problem of allowing user X to perform actions on behalf of user Y. For example, if we sell the functionality of ordering beverages as a B2B API, the CEO of the partner company might want to control (personally or programmatically) the orders the employees make.

In the case of the “triple-stacked” access checking endpoint, our only option is implementing a new endpoint with a new interface. With stateless tokens, we might do the following:

Include in the token a list of the users that the token allows access to:

{

// The list of identifiers

// of user profiles accessible

// with the token

"user_ids",

// Token creation timestamp

"iat"

}

Modify the permission-checking procedure (i.e., make changes in the code of a local SDK or a daemon) so that it allows performing the action if the user_id query parameter value is included in the user_ids list from the token payload.

This approach might be further enhanced by introducing granular permissions to carry out specific actions, access levels, additional ACL service calls, etc.
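The modified permission check might be as small as the sketch below. The function name is_allowed is hypothetical, the payload is assumed to be already verified and decoded, and keeping old single-user tokens valid is an assumption made for the example rather than something the text requires.

```python
from typing import Any, Dict


def is_allowed(payload: Dict[str, Any], requested_user_id: str) -> bool:
    """Return True if the token payload grants access to requested_user_id."""
    # New-style tokens carry a list of accessible user profiles
    allowed = set(payload.get("user_ids", []))
    # Assumption: old-style tokens with a single user_id keep working
    if "user_id" in payload:
        allowed.add(payload["user_id"])
    return requested_user_id in allowed
```

For example, a token issued to the partner's CEO with "user_ids" listing the employees would pass this check for any of their profiles, while the endpoint interface stays unchanged.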

Importantly, the apparent redundancy of the format ceases to exist: the user_id in the request no longer duplicates the token payload, as these identifiers carry different semantics: which resource the operation is performed on versus who performs it. The two often coincide, but this coincidence is just a special case. Unfortunately, this doesn't negate the fact that it's quite easy to simply forget to implement this unobvious check in the code. This is the way.

This is Chapter 37 of “The API” book being written by Sergey Konstantinov. I also have a book on the history of beer and historical beer styles, a Telegram channel on interesting classical music recordings, a travel photo blog on Unsplash, and a website ranking fantasy & science fiction novels based on the awards they have received.